What is Hadoop? 🧿

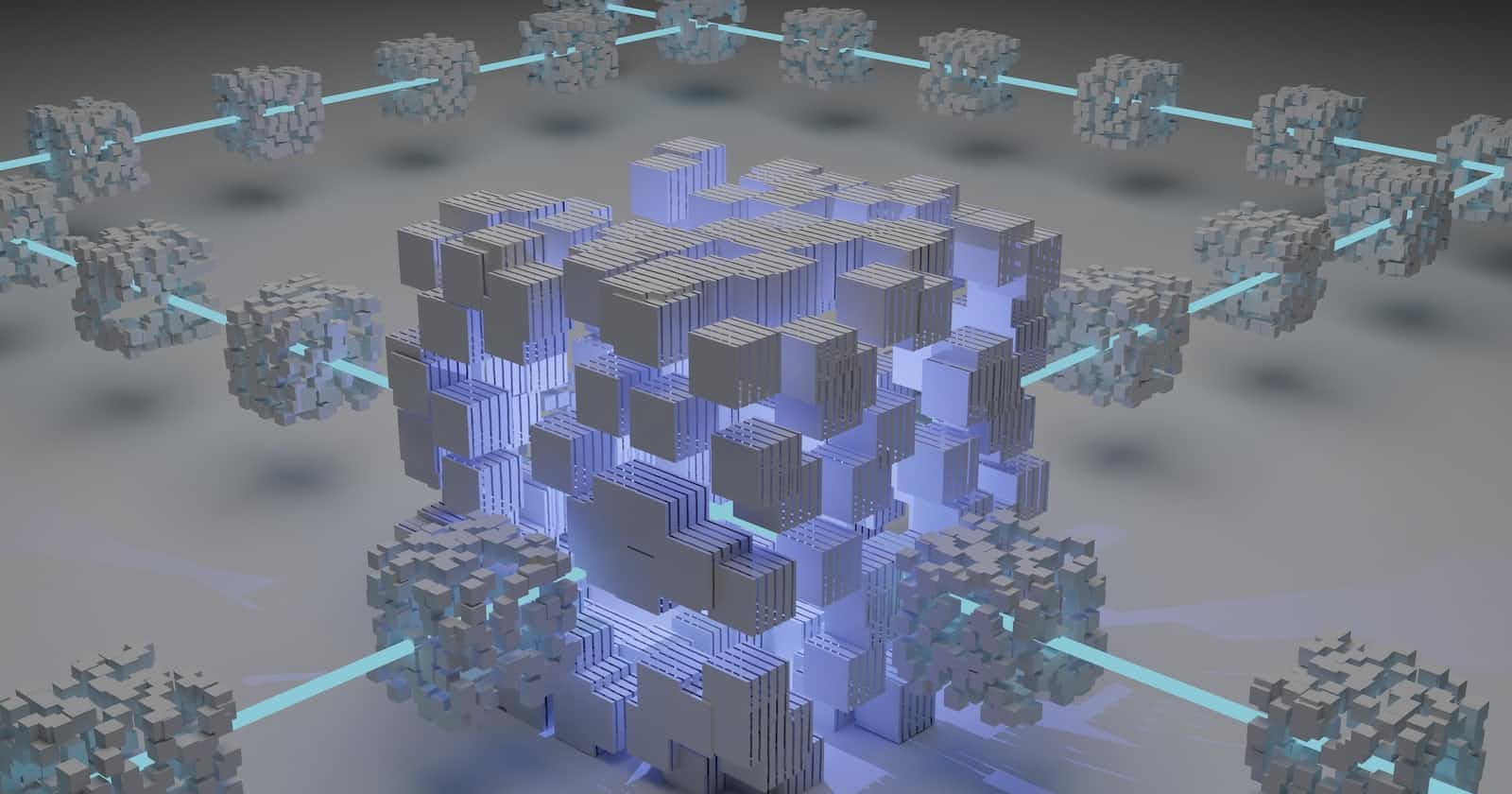

Hadoop is an open-source Apache framework, written in Java, that allows distributed processing of large datasets across clusters of computers using simple programming models. It provides both distributed storage and distributed computation across the cluster. Hadoop is designed to scale from a single server to thousands of machines, each offering local computation and storage.

Components of Hadoop 🧿

HDFS

MapReduce

YARN

Hadoop Distributed File System

The Hadoop Distributed File System (HDFS) is a distributed file system based on the design of the Google File System (GFS) and built to run on commodity hardware.
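
To make "distributed storage" a little more concrete, here is a minimal sketch of how a file might be cut into blocks and copied onto several machines. It is a toy model, not the HDFS API: `plan_blocks` and the DataNode names are hypothetical, the 128 MB block size and replication factor of 3 are the commonly used defaults (both configurable), and the round-robin placement is a simplification of the rack-aware policy real HDFS uses.

```python
import math

BLOCK_SIZE_MB = 128      # commonly used HDFS default block size (configurable)
REPLICATION_FACTOR = 3   # commonly used HDFS default replication (configurable)


def plan_blocks(file_size_mb, datanodes,
                block_size_mb=BLOCK_SIZE_MB, replication=REPLICATION_FACTOR):
    """Hypothetical helper: return a list of (block_index, [nodes holding a replica])."""
    if replication > len(datanodes):
        raise ValueError("need at least as many DataNodes as replicas")

    # A file is stored as fixed-size blocks; the last block may be partly filled.
    num_blocks = math.ceil(file_size_mb / block_size_mb)

    plan = []
    for block in range(num_blocks):
        # Simplified round-robin placement (assumption); real HDFS is rack-aware.
        holders = [datanodes[(block + r) % len(datanodes)]
                   for r in range(replication)]
        plan.append((block, holders))
    return plan


if __name__ == "__main__":
    for block, holders in plan_blocks(300, ["dn1", "dn2", "dn3", "dn4"]):
        print(block, holders)
```

Because every block lives on several machines, losing one commodity server does not lose the data, which is the point of running on inexpensive hardware.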

MapReduce

MapReduce is a parallel programming model, devised at Google, for writing distributed applications that efficiently process large amounts of data (multi-terabyte datasets) on large clusters (thousands of nodes) of commodity hardware in a reliable, fault-tolerant manner.
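
The model is easiest to see with the classic word-count example. The sketch below simulates the three stages (map, shuffle, reduce) in a single Python process. It does not use Hadoop itself, and the function names `map_phase`, `shuffle_phase`, `reduce_phase`, and `word_count` are illustrative assumptions rather than part of any Hadoop API. On a real cluster the map and reduce calls would run in parallel on different nodes.

```python
from collections import defaultdict


def map_phase(document):
    """Map: emit a (word, 1) pair for every word in one input record."""
    return [(word.lower(), 1) for word in document.split()]


def shuffle_phase(mapped_pairs):
    """Shuffle: group all emitted values by key between map and reduce."""
    groups = defaultdict(list)
    for key, value in mapped_pairs:
        groups[key].append(value)
    return groups


def reduce_phase(key, values):
    """Reduce: combine all values for one key into a single result."""
    return key, sum(values)


def word_count(documents):
    """Run the three stages in sequence over a list of text records."""
    mapped = []
    for doc in documents:
        mapped.extend(map_phase(doc))
    grouped = shuffle_phase(mapped)
    return dict(reduce_phase(k, v) for k, v in grouped.items())


if __name__ == "__main__":
    docs = ["Hadoop stores data", "Hadoop processes data"]
    print(word_count(docs))
    # {'hadoop': 2, 'stores': 1, 'data': 2, 'processes': 1}
```

The programmer writes only the map and reduce functions. Splitting the input, moving intermediate pairs between nodes, and rerunning failed tasks are left to the framework.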

YARN

YARN (Yet Another Resource Negotiator) is Hadoop's resource-management layer. It allocates cluster resources such as CPU and memory to applications and schedules their tasks.

Challenges with Hadoop 🧿

Small File Concerns

Slow Processing Speed

No Real Time Processing

No Iterative Processing

Not Easy to Use

Security Concerns